🌐 Do AI models actually understand GPS coordinates?

PLUS: Tracking land use changes with foundation models, and more.

Hey guys, here’s this week’s edition of the Spatial Edge — a newsletter that’s as frequent as a Marvel movie. In any case, the aim is to make you a better geospatial data scientist in less than five minutes a week.

In today’s newsletter:

Spatial AI: LLMs struggle with pure GPS math.

Change Detection: Foundation model tracks complex landscape changes.

War Mapping: Satellites track destruction in conflict zones.

Urban Shade: High-resolution dataset maps global pedestrian shade.

Image Alignment: Massive dataset of aligned satellite pairs.

Research you should know about

1. Do AI models actually understand GPS coordinates?

Large Language Models (LLMs) are increasingly being built into navigation apps and geographical tools, but their ability to actually understand and manipulate GPS coordinates has remained a bit of a mystery. Most spatial AI tests involve asking a model to describe where an object is in a photo of a living room, which doesn’t really translate to calculating the distance between Tokyo and Sydney. To bridge this gap, researchers built GPSBENCH, a new dataset designed to test whether LLMs genuinely understand the Earth’s coordinate system or if they are just bluffing.

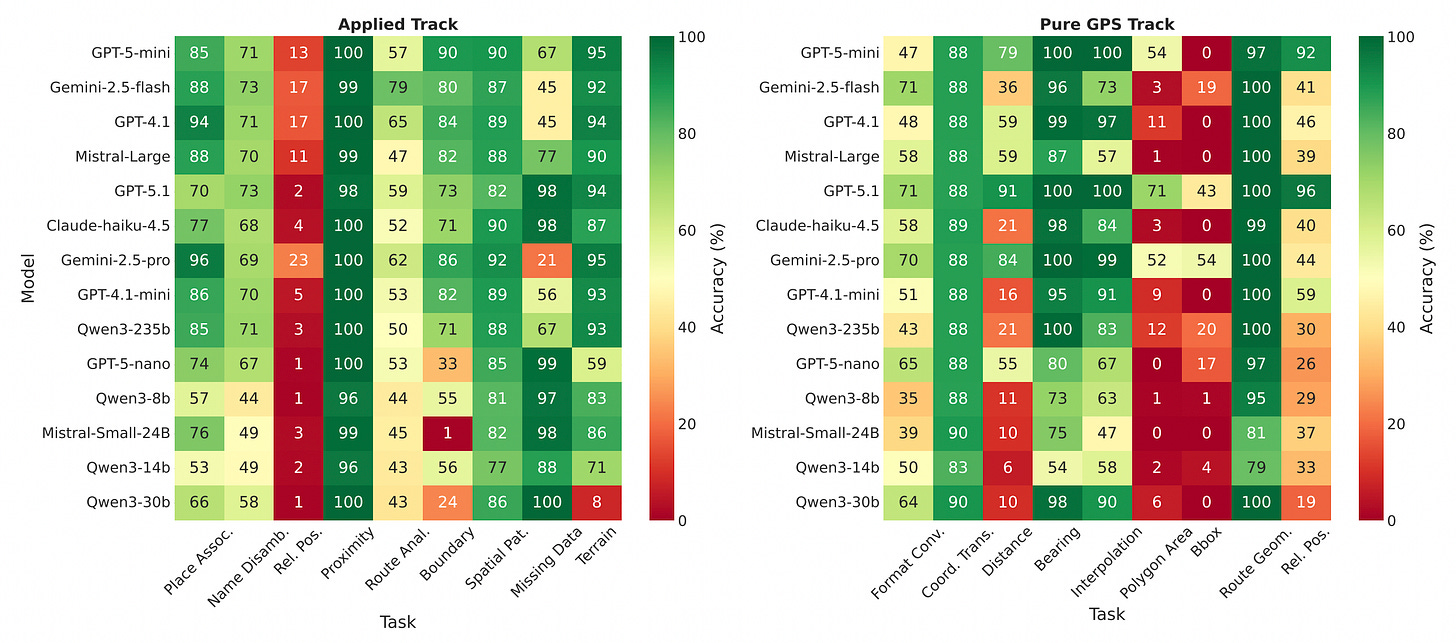

The researchers tested 14 top-tier LLMs across two main tracks: ‘Pure GPS’ (geometric math like calculating distances or bearings) and ‘Applied’ (using world knowledge, like identifying which city sits at a specific coordinate). The results show a pretty stark divide. Most models are much better at the ‘Applied’ track, using their vast pre-trained world knowledge to guess locations, but they fall apart when asked to do the spherical geometry required for the ‘Pure GPS’ track. For instance, calculating the area of a polygon on the globe had a near 0 per cent success rate across the board, which suggests the models haven’t actually learned the underlying geodetic formulas.
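
To make the ‘Pure GPS’ track a bit more concrete, here’s a minimal Python sketch of the kind of geodetic arithmetic the models struggled with: great-circle distance (haversine), initial bearing, and the area of a polygon on a sphere. It assumes a perfectly spherical Earth with a mean radius of 6,371 km rather than the WGS84 ellipsoid, and the function names are illustrative, not taken from GPSBENCH.

```python
import math

EARTH_RADIUS_KM = 6371.0  # mean Earth radius; spherical approximation, not WGS84


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance in km between two (lat, lon) points given in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


def initial_bearing_deg(lat1, lon1, lat2, lon2):
    """Initial compass bearing from point 1 to point 2, in degrees within [0, 360)."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dlmb = math.radians(lon2 - lon1)
    x = math.sin(dlmb) * math.cos(phi2)
    y = math.cos(phi1) * math.sin(phi2) - math.sin(phi1) * math.cos(phi2) * math.cos(dlmb)
    return (math.degrees(math.atan2(x, y)) + 360) % 360


def spherical_polygon_area_km2(vertices):
    """Area of a simple polygon on a sphere; vertices are (lat, lon) pairs in degrees.

    Sums the signed spherical excess of the strip between each edge and the equator.
    Assumes the polygon does not contain a pole and no edge spans exactly 180 degrees
    of longitude.
    """
    total = 0.0
    n = len(vertices)
    for i in range(n):
        lat1, lon1 = vertices[i]
        lat2, lon2 = vertices[(i + 1) % n]
        phi1, phi2 = math.radians(lat1), math.radians(lat2)
        # wrap the longitude step into [-180, 180) so edges crossing the antimeridian work
        dlon = math.radians((lon2 - lon1 + 180) % 360 - 180)
        total += 2 * math.atan(
            math.tan(dlon / 2) * math.sin((phi1 + phi2) / 2) / math.cos((phi2 - phi1) / 2)
        )
    return abs(total) * EARTH_RADIUS_KM ** 2


# Tokyo to Sydney
print(round(haversine_km(35.6762, 139.6503, -33.8688, 151.2093)))
print(round(initial_bearing_deg(35.6762, 139.6503, -33.8688, 151.2093), 1))
```

On this spherical model, Tokyo to Sydney comes out at roughly 7,800 km. None of this is exotic, which is rather the point: a few lines of deterministic code do reliably what the benchmarked models mostly could not.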

Interestingly, the study also revealed that LLMs have a highly hierarchical understanding of geography. They can almost always identify the correct country for a given GPS coordinate, but their accuracy drops off a cliff when asked to pinpoint the specific city. And when the researchers added random ‘noise’ to the coordinates, the models’ performance didn’t drop, which suggests they aren’t just memorising exact coordinates from databases but have a genuine, albeit fuzzy, spatial understanding. Ultimately, this means that if you want an AI to perform precise geographic reasoning, you still need to augment it with external calculators or dedicated GPS tools, because right now, their internal compass is a bit broken.
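
The noise test can be pictured roughly like this: nudge each coordinate a random distance (up to some radius) in a random direction, then ask the model the same question. The sketch below is a hypothetical setup. The exact noise scheme used in GPSBENCH isn’t covered here, and the 500 m radius in the usage lines is just an assumed value.

```python
import math
import random

EARTH_RADIUS_M = 6_371_000.0  # spherical Earth assumption


def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in metres between two (lat, lon) points in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    a = (math.sin((phi2 - phi1) / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(math.radians(lon2 - lon1) / 2) ** 2)
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def destination(lat, lon, bearing_deg, distance_m):
    """Point reached by travelling distance_m along a great circle at the given initial bearing."""
    phi1, lmb1 = math.radians(lat), math.radians(lon)
    theta = math.radians(bearing_deg)
    delta = distance_m / EARTH_RADIUS_M  # angular distance in radians
    phi2 = math.asin(math.sin(phi1) * math.cos(delta)
                     + math.cos(phi1) * math.sin(delta) * math.cos(theta))
    lmb2 = lmb1 + math.atan2(math.sin(theta) * math.sin(delta) * math.cos(phi1),
                             math.cos(delta) - math.sin(phi1) * math.sin(phi2))
    lon2 = (math.degrees(lmb2) + 540) % 360 - 180  # wrap longitude into [-180, 180)
    return math.degrees(phi2), lon2


def jitter(lat, lon, max_offset_m, rng):
    """Hypothetical noise model: move a point up to max_offset_m in a random direction."""
    bearing = rng.uniform(0.0, 360.0)
    # sqrt spreads points roughly uniformly over the disc (for small offsets)
    # instead of bunching them near the original coordinate
    distance = max_offset_m * math.sqrt(rng.random())
    return destination(lat, lon, bearing, distance)


rng = random.Random(0)
noisy_lat, noisy_lon = jitter(35.6762, 139.6503, 500.0, rng)  # Tokyo, jittered by up to 500 m
print(noisy_lat, noisy_lon)
```

If a model really had memorised a lookup table of exact coordinates, small perturbations like these should hurt it; robustness to them is what points towards a fuzzier, more genuine spatial sense.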

You can access the data and code here.

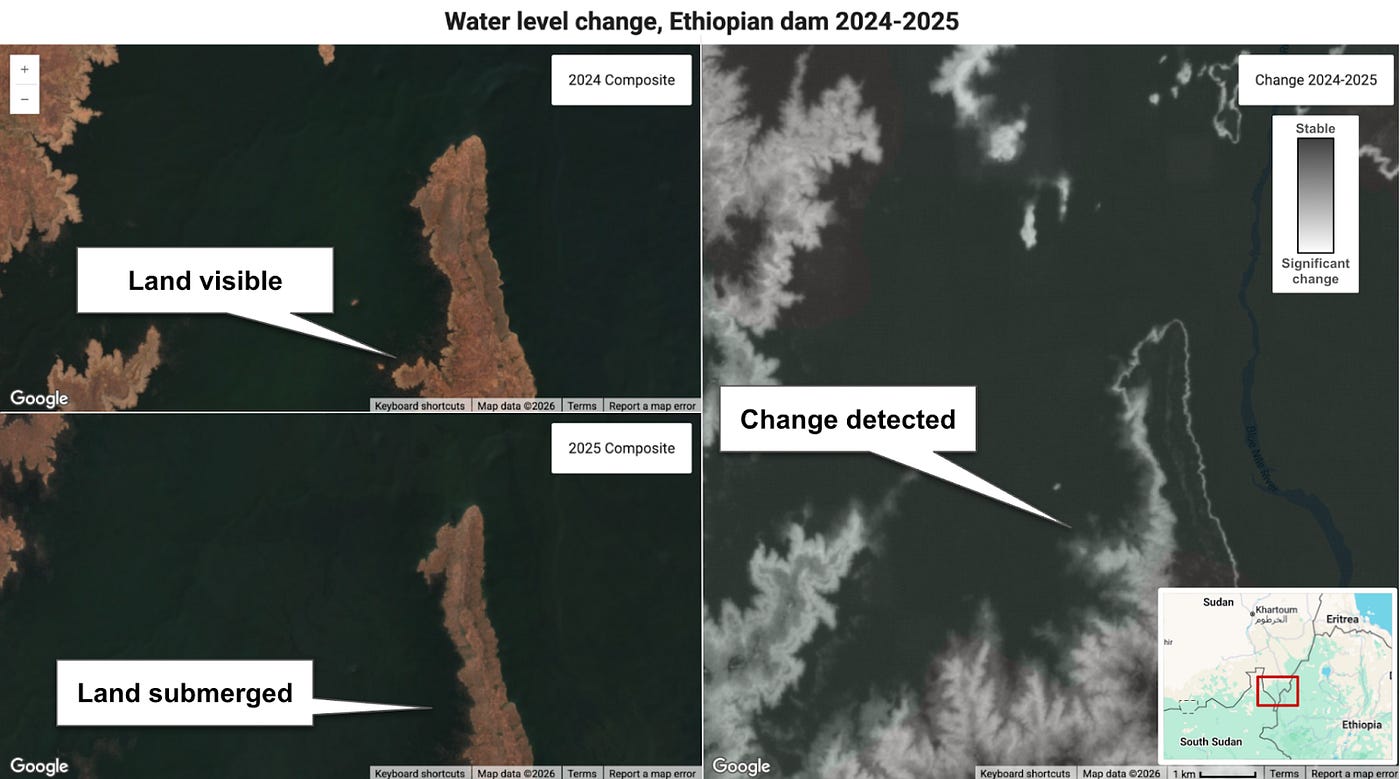

2. A new foundation model approach to tracking landscape changes

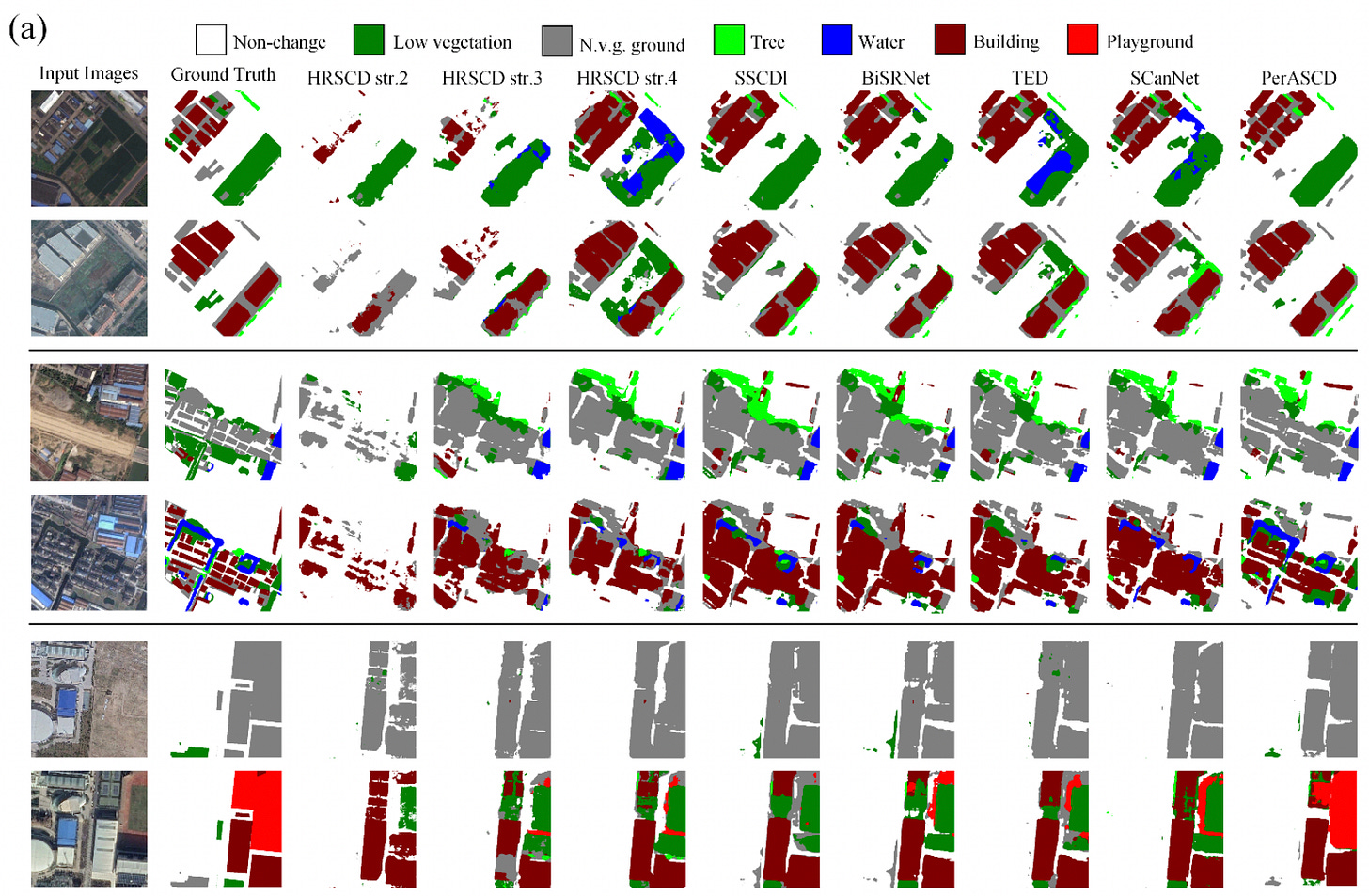

Tracking changes on the Earth’s surface usually relies on binary change detection, which simply spots where a landscape has been altered. Semantic change detection takes this a step further by identifying exactly what those changes are, such as a dense forest being replaced by a paved car park. While incredibly useful for urban planning and disaster relief, building models for this task is notoriously difficult. Most current systems rely on standard visual encoders trained on everyday photos, leaving them poorly equipped to understand the complex, top-down perspective of satellite imagery. Furthermore, because the vast majority of land remains unchanged between satellite passes, the models often get overwhelmed by the sheer imbalance of data and resort to lazy predictions.
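
The difference between binary and semantic change detection, and why class imbalance bites, is easy to see on a toy example. This minimal Python sketch (the grid and class names are made up) derives a binary change mask and a tally of from/to transitions from two land-cover label maps.

```python
from collections import Counter


def change_maps(before, after):
    """Compare two per-pixel land-cover label grids of the same shape."""
    # binary change detection: 1 wherever the label differs, 0 elsewhere
    binary = [[int(b != a) for b, a in zip(row_b, row_a)]
              for row_b, row_a in zip(before, after)]
    # semantic change detection: what changed into what
    transitions = Counter(
        (b, a)
        for row_b, row_a in zip(before, after)
        for b, a in zip(row_b, row_a)
        if b != a
    )
    return binary, transitions


def changed_fraction(binary):
    """Share of pixels flagged as changed."""
    cells = [v for row in binary for v in row]
    return sum(cells) / len(cells)


before = [["forest", "forest", "water"],
          ["forest", "forest", "water"]]
after = [["forest", "car_park", "water"],
         ["forest", "forest", "water"]]

binary, transitions = change_maps(before, after)
print(binary)                          # [[0, 1, 0], [0, 0, 0]]
print(transitions)                     # Counter({('forest', 'car_park'): 1})
print(1 - changed_fraction(binary))    # pixel accuracy of always predicting 'no change'
```

Only one of the six pixels changes here, so a lazy model that predicts ‘no change’ everywhere still scores about 83 per cent pixel accuracy while finding nothing at all. Real scenes are usually far more lopsided than this.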

A new paper introduces PerASCD, a framework driven by a massive remote sensing foundation model. By leveraging an AI that has been explicitly pre-trained on vast amounts of satellite data, the system possesses a much deeper, native understanding of geospatial features. The team paired this foundation model with a custom-built Cascaded Gated Decoder. Older methods typically forced the AI through highly convoluted, multi-branch pathways to separate the before and after semantics. This new decoder streamlines the entire process, handling feature compression, multi-level fusion, and upsampling in a single modular pipeline, which drastically simplifies the overall architecture.
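
For a rough feel of what a gated, cascaded decoder does, here’s a generic NumPy sketch: each encoder level is compressed to a common channel width with a 1x1 convolution, the coarser output is upsampled, and a sigmoid gate decides per pixel and channel how much to take from the upsampled path versus the skip connection. This is not PerASCD’s actual Cascaded Gated Decoder, whose internals aren’t described here. The weights are random placeholders for learned parameters, and the before/after (bi-temporal) handling is left out.

```python
import numpy as np


def conv1x1(x, w):
    """1x1 convolution as a per-pixel matrix multiply: (C_in, H, W) -> (C_out, H, W)."""
    return np.einsum("oc,chw->ohw", w, x)


def upsample2x(x):
    """Nearest-neighbour upsampling by a factor of 2 along H and W."""
    return x.repeat(2, axis=1).repeat(2, axis=2)


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


def gated_fuse(coarse, skip, w_gate):
    """Blend two same-shaped maps with a gate in [0, 1] computed from both."""
    g = sigmoid(conv1x1(np.concatenate([coarse, skip], axis=0), w_gate))
    return g * coarse + (1.0 - g) * skip


def cascaded_decode(features, dim, rng):
    """features: encoder maps ordered finest to coarsest, each half the previous H and W."""
    # hypothetical random weights standing in for learned parameters
    compress = [0.1 * rng.standard_normal((dim, f.shape[0])) for f in features]
    gates = [0.1 * rng.standard_normal((dim, 2 * dim)) for _ in features[:-1]]
    x = conv1x1(features[-1], compress[-1])  # start from the coarsest, most semantic level
    for level in range(len(features) - 2, -1, -1):
        skip = conv1x1(features[level], compress[level])  # feature compression
        x = gated_fuse(upsample2x(x), skip, gates[level])  # upsampling + gated fusion
    return x


rng = np.random.default_rng(0)
# three hypothetical encoder levels: 64x32x32, 128x16x16, 256x8x8
features = [rng.standard_normal((64, 32, 32)),
            rng.standard_normal((128, 16, 16)),
            rng.standard_normal((256, 8, 8))]
decoded = cascaded_decode(features, dim=16, rng=rng)
print(decoded.shape)  # (16, 32, 32)
```

The appeal of this kind of design is that every level goes through the same small compress, upsample and gate step, so adding or removing encoder levels doesn’t require rewiring separate branches.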

The researchers also patched a frustrating mathematical glitch that has plagued previous models. Older systems used a rigid loss function to force the AI to separate changed and unchanged pixels, but the harsh mathematical boundaries of this rule would often cause the model’s accuracy to suddenly collapse during training. By introducing a softened version of this mathematical rule, the team smoothed out the learning gradients and eliminated the numerical instability. When tested on major benchmark datasets like SECOND and LandsatSCD, the framework achieved state-of-the-art performance, delivering significantly sharper boundaries and fewer false positives than previous methods.
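
The paper’s exact loss isn’t spelled out here, so treat this as an assumption: a common ‘rigid’ rule in change detection is a margin hinge, max(0, m − d), which pushes the feature distance d of changed pixels beyond a margin m. Its gradient with respect to z = m − d jumps abruptly from 1 to 0 at the margin. A standard way to soften it is softplus, which is smooth everywhere and approaches the hinge as the sharpness parameter beta grows. The minimal Python sketch below compares the two; the names, margin and beta values are illustrative.

```python
import math


def hinge(z):
    """Hard margin term max(0, z), with z = margin - distance."""
    return max(0.0, z)


def hinge_grad(z):
    # derivative jumps from 1 to 0 as z crosses zero: the 'harsh boundary'
    return 1.0 if z > 0 else 0.0


def sigmoid(t):
    # numerically stable logistic function
    if t >= 0:
        return 1.0 / (1.0 + math.exp(-t))
    e = math.exp(t)
    return e / (1.0 + e)


def soft_hinge(z, beta=5.0):
    """softplus(beta * z) / beta: smooth everywhere, approaches max(0, z) as beta grows."""
    t = beta * z
    # stable form of log(1 + exp(t)) that avoids overflow for large t
    return (max(t, 0.0) + math.log1p(math.exp(-abs(t)))) / beta


def soft_hinge_grad(z, beta=5.0):
    # derivative of soft_hinge is sigmoid(beta * z): it slides smoothly from 0 to 1
    return sigmoid(beta * z)


def contrastive_loss(d, changed, margin=2.0, soft=False, beta=5.0):
    """Toy per-pixel loss on the feature distance d between 'before' and 'after'."""
    if not changed:
        return d * d  # pull unchanged pixels' features together
    z = margin - d
    # push changed pixels' features at least `margin` apart
    return soft_hinge(z, beta) if soft else hinge(z)


for z in (-0.01, 0.0, 0.01):
    print(z, hinge_grad(z), round(soft_hinge_grad(z), 3))
```

Near the margin the hard version’s gradient flips from 0 to 1 in a single step, while the softened version passes smoothly through 0.5, which is the kind of gentler training signal the authors describe.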

3. Mapping war zones with combined satellite data

The ongoing war in Ukraine has caused immense damage to infrastructure, agriculture and the broader environment. Obviously, sending survey teams into active conflict zones to assess this wreckage is incredibly dangerous and often impossible. To get around this, researchers publishing in Scientific Reports have developed a method to automatically map the destruction from space using freely available satellite data. By combining radar data from Sentinel-1 with optical imagery from Sentinel-2, they have created a system that monitors conflict-related changes without putting anyone in harm’s way.

The interesting part of this approach is how it adapts to different types of terrain. The algorithm automatically classifies the land cover (separating urban environments from rural or agricultural areas) and then chooses the best analysis strategy for that specific zone. The researchers combined radar-based change detection with optical image classification to spot destruction that single-sensor methods might miss. They also added a ‘context-aware smoothing’ technique, which essentially filters out visual noise and local glitches to produce a much cleaner and more reliable map.
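
As a rough illustration of combining the two sensors, the sketch below uses generic stand-ins rather than the study’s actual algorithm. It applies a SAR log-ratio test (a classic radar change detector that flags pixels whose backscatter changed by more than a threshold in decibels), masks the result with an optical built-up classification, and then runs a 3x3 majority filter as a simple form of spatial smoothing. The 3 dB threshold and the function names are assumptions for illustration.

```python
import math


def log_ratio_change(pre, post, threshold_db=3.0):
    """Flag pixels whose SAR backscatter changed by more than threshold_db decibels.

    pre and post are grids of linear (not dB) intensities; values must be positive.
    """
    return [[int(abs(10.0 * math.log10(b / a)) > threshold_db)
             for a, b in zip(row_pre, row_post)]
            for row_pre, row_post in zip(pre, post)]


def fuse_damage(sar_change, built_up):
    """Keep radar-detected change only where an optical classifier says built-up (0/1 grids)."""
    return [[s & u for s, u in zip(rs, ru)] for rs, ru in zip(sar_change, built_up)]


def majority_filter(mask):
    """3x3 majority vote: a pixel stays 1 only if most of its neighbourhood is 1 (ties go to 0)."""
    h, w = len(mask), len(mask[0])
    out = [[0] * w for _ in range(h)]
    for i in range(h):
        for j in range(w):
            votes = [mask[y][x]
                     for y in range(max(0, i - 1), min(h, i + 2))
                     for x in range(max(0, j - 1), min(w, j + 2))]
            out[i][j] = int(2 * sum(votes) > len(votes))
    return out


pre = [[1.0, 1.0, 1.0], [1.0, 1.0, 1.0], [1.0, 1.0, 1.0]]
post = [[0.1, 0.1, 1.0], [0.1, 0.1, 1.0], [1.0, 1.0, 0.1]]
built_up = [[1, 1, 1], [1, 1, 1], [1, 1, 1]]
# the isolated change in the bottom-right corner is smoothed away
damage = majority_filter(fuse_damage(log_ratio_change(pre, post), built_up))
print(damage)
```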

When put to the test, the results were pretty impressive. The team compared their automated findings against the official UNOSAT database and found that their tool successfully detected over 80 per cent of damaged buildings. Furthermore, their smoothing technique proved to be far more accurate at detecting built-up areas than existing global land-cover products like AlphaEarth. Ultimately, this gives humanitarian organisations a rapid and reliable way to pinpoint destruction in restricted regions.

Geospatial Datasets

1. Urban pedestrian shade mapping dataset

This dataset provides high-resolution simulations of pedestrian shade provision across nine geographically diverse global cities, including Amsterdam, Sydney, and Rio de Janeiro. You can access the data here and the code here.

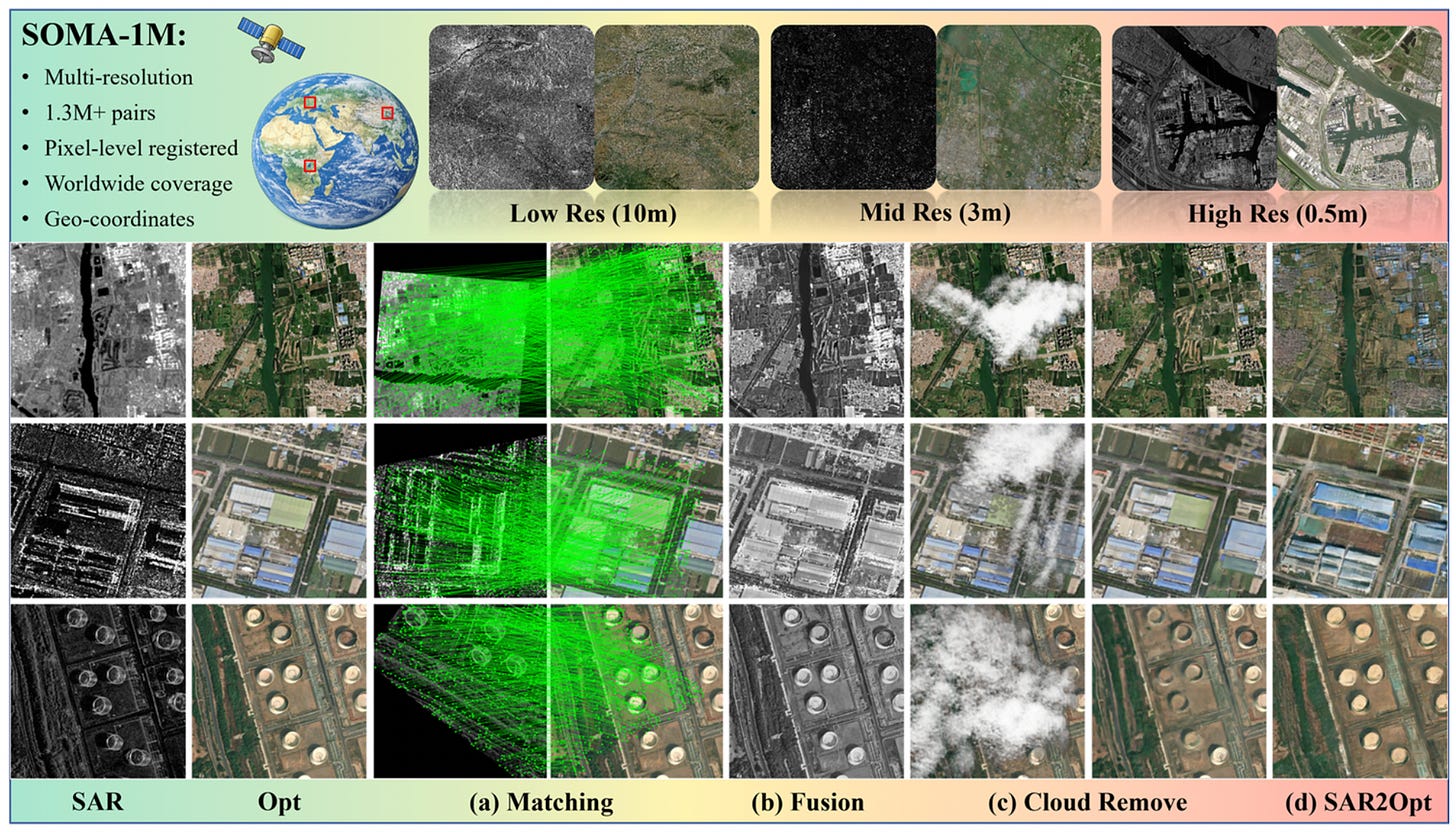

2. SAR-Optical image alignment dataset

The SOMA-1M dataset offers a massive collection of over 1.3 million precisely aligned Synthetic Aperture Radar (SAR) and optical image pairs. The dataset will be publicly released here.

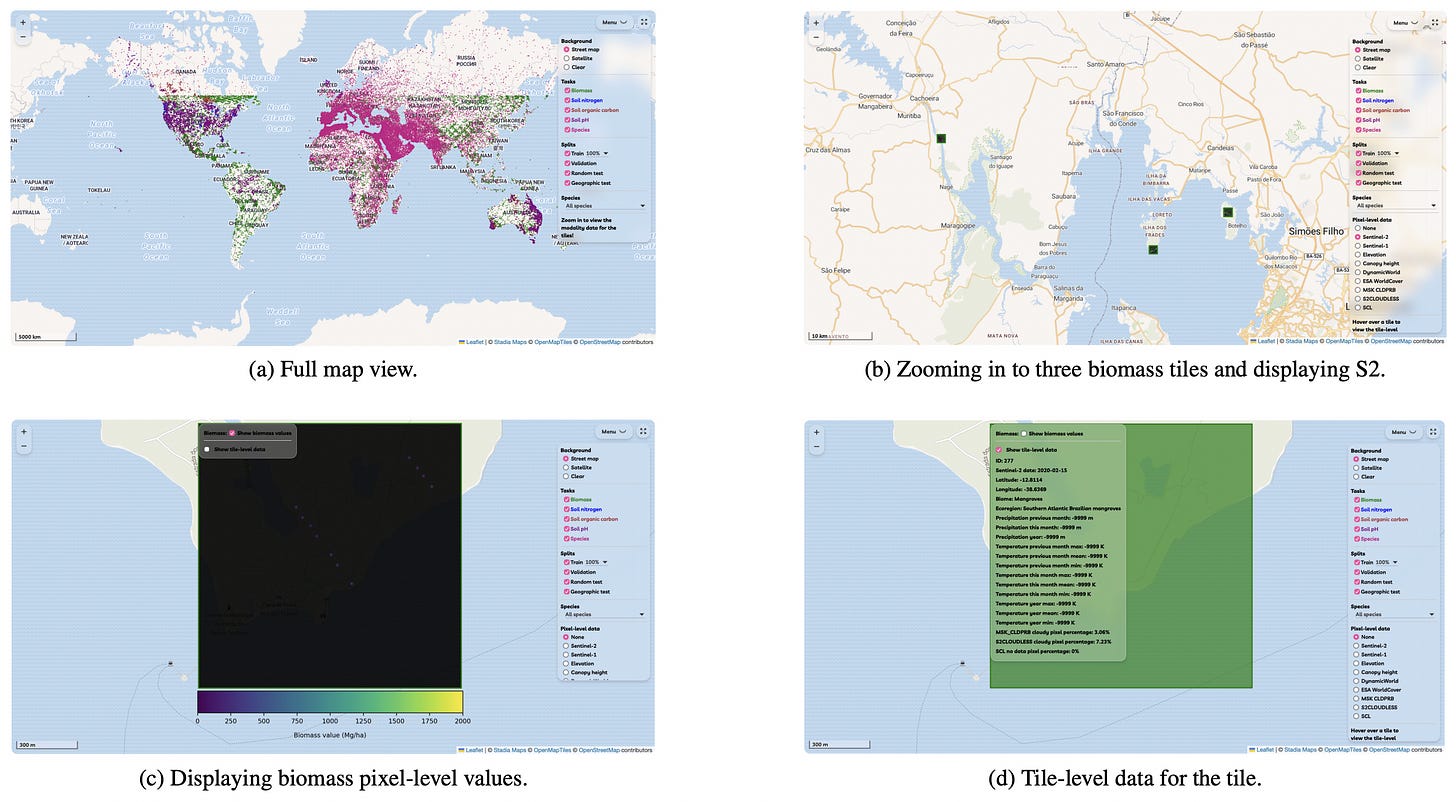

3. Multimodal environmental monitoring dataset

The newly introduced MMEARTH-BENCH is a collection of five environmental monitoring tasks designed to evaluate geospatial machine learning models on a global scale. It features 12 diverse data modalities and includes geographic test splits to specifically challenge how well algorithms adapt to unseen regions. You can access the dataset, code, and read more about the project here.

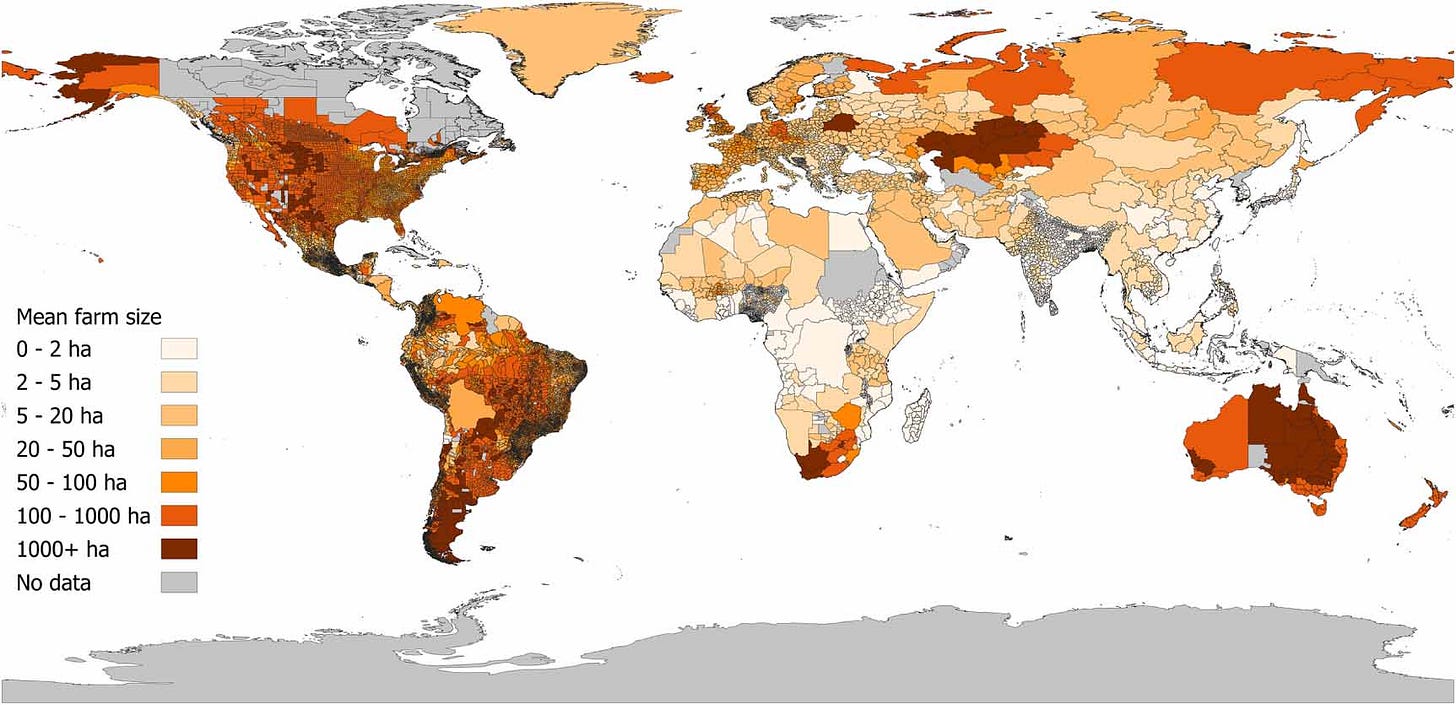
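
Geographic test splits usually mean keeping whole regions out of training, so a model can’t score well just by interpolating between nearby samples. MMEARTH-BENCH’s exact split procedure isn’t covered here; the minimal Python sketch below shows one generic approach, a spatial block split that assigns whole latitude/longitude grid cells to either train or test. The block size, test fraction and seed are illustrative.

```python
import math
import random


def spatial_block_split(points, block_deg=10.0, test_frac=0.2, seed=0):
    """Split (lat, lon) samples so each grid block lands entirely in train or in test."""

    def block(lat, lon):
        return (math.floor(lat / block_deg), math.floor(lon / block_deg))

    blocks = sorted({block(lat, lon) for lat, lon in points})
    rng = random.Random(seed)
    n_test = max(1, round(test_frac * len(blocks)))
    test_blocks = set(rng.sample(blocks, n_test))
    train = [p for p in points if block(*p) not in test_blocks]
    test = [p for p in points if block(*p) in test_blocks]
    return train, test


# a coarse global grid of hypothetical sample locations
points = [(lat, lon) for lat in range(-60, 61, 7) for lon in range(-170, 171, 13)]
train, test = spatial_block_split(points, block_deg=20.0, test_frac=0.25, seed=1)
print(len(train), len(test))
```

A random per-sample split would scatter test points right next to training points; holding out whole blocks is what actually probes how well a model copes with unseen regions.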

4. Global subnational farm size dataset

This dataset provides the most comprehensive and spatially detailed empirical assessment of global mean farm size to date, targeting the year 2000. Unlike previous products that rely on national averages or modelled data, this collection compiles census and survey information at the smallest available subnational administrative level for 101 countries, alongside national data for 99 others. You can access the data and code here.

Other useful bits

The team behind AlphaEarth Foundations has just rolled out their 2025 Satellite Embedding dataset, cleverly packing an entire year's worth of satellite data into neat 10-metre pixels. This update makes it easier than ever to spot global shifts without the headache of processing raw imagery.
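
One common way to use annual embeddings like these for change detection is to compare each pixel’s vector across years: high cosine similarity suggests little change, while low similarity flags a shift. The Satellite Embedding dataset stores 64-dimensional vectors per pixel. The sketch below works on plain Python lists rather than calling Earth Engine, uses toy 3-dimensional vectors, and the 0.8 threshold is an assumed value you’d want to tune.

```python
import math


def cosine_similarity(u, v):
    """Cosine of the angle between two non-zero embedding vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))


def flag_changed(emb_prev, emb_curr, threshold=0.8):
    """Flag pixels whose year-to-year embedding similarity falls below threshold (assumed value)."""
    return [cosine_similarity(u, v) < threshold for u, v in zip(emb_prev, emb_curr)]


# toy 3-D vectors standing in for real 64-D embeddings of two pixels in two years
emb_2024 = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]]
emb_2025 = [[0.9, 0.1, 0.0], [1.0, 0.0, 0.0]]
print(flag_changed(emb_2024, emb_2025))
```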

Google DeepMind has showcased a new realistic city planner app powered by Gemini 3.1 Pro. It tackles complex terrain and simulates traffic to generate incredibly high-quality visualisations of future urban infrastructure.

A new time series foundation model called TimesFM, pre-trained on 100 billion data points, has just been released. It’s showing pretty impressive performance across a whole host of domains, making it a useful resource for anyone keen to dive into machine learning and forecasting.

Jobs

Mapbox is looking for a remote Software Development Engineer based in either Germany or Finland.

The European Centre for Medium-Range Weather Forecasts (ECMWF) is looking for a (1) Data Management Engineer and (2) Climate Data and AI/Machine Learning Scientist, both based in either Bonn, Germany or Reading, UK.

The European Organisation for Nuclear Research (CERN) is looking for a GIS Specialist and Developer based in Geneva.

Just for Fun

The Artemis II crew ran into a very Earthly problem whilst en route to the Moon. Just hours into their ten-day flyby, the astronauts had to radio mission control for IT support after their Microsoft Outlook crashed. It’s comforting to know that no one is safe from an email glitch.

That’s it for this week.

I’m always keen to hear from you, so please let me know if you have:

new geospatial datasets

newly published papers

geospatial job opportunities

and I’ll do my best to showcase them here.

Yohan
